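Real-time data needs real-time processing

Once your data is in motion, the next step is making sense of it. Stream processing lets you draw insights from data streams immediately, but setting up the infrastructure to support it is not easy. That is exactly why Confluent developed ksqlDB, a database purpose-built for stream processing applications.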

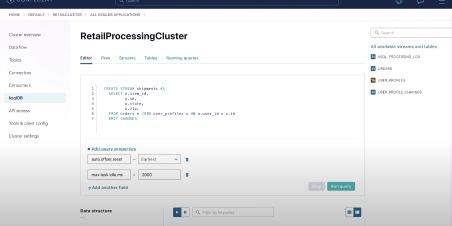

Process real-time data streams instantly with just a few SQL statements

Create real-time value by processing data in motion rather than data at rest

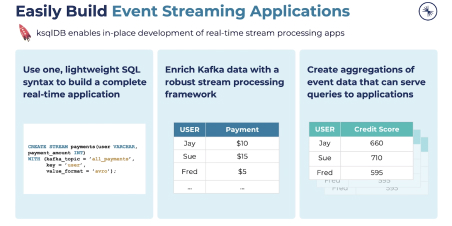

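Continuously processing the data flowing across your business makes that data actionable the moment it arrives. ksqlDB's intuitive syntax lets you quickly access and augment data in Kafka, so development teams can seamlessly build innovative real-time customer experiences and meet data-driven operational needs.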

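Simplify your stream processing architecture

ksqlDB offers a single solution for collecting streams of data, enriching them, and serving queries on newly derived streams and tables. That means less infrastructure to deploy, maintain, scale, and secure. With fewer moving parts in your data architecture, you can focus on what really matters: innovation.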
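To make "collect, enrich, and derive" concrete, here is a minimal sketch in plain Python. It is not ksqlDB code and uses no Confluent API; the `users` lookup, the `orders` events, and both function names are hypothetical, chosen only to illustrate how an enriched stream and an aggregated table can be derived from a source stream.

```python
from collections import defaultdict

# Hypothetical reference data: a "table" of user id -> city.
users = {"u1": "Tokyo", "u2": "Osaka"}

# Hypothetical source stream of order events.
orders = [
    {"user_id": "u1", "amount": 30},
    {"user_id": "u2", "amount": 20},
    {"user_id": "u1", "amount": 50},
]


def enrich(events, lookup):
    """Derived stream: each event joined with its user's city."""
    for event in events:
        yield {**event, "city": lookup.get(event["user_id"], "unknown")}


def totals_by_city(events):
    """Derived table: running total of order amounts per city."""
    table = defaultdict(int)
    for event in events:
        table[event["city"]] += event["amount"]
    return dict(table)


enriched_orders = list(enrich(orders, users))
city_totals = totals_by_city(enriched_orders)
print(city_totals)
```

In ksqlDB itself, the same idea would be expressed declaratively in SQL rather than with hand-written loops.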

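Start building real-time applications with simple SQL syntax

Using a familiar, lightweight SQL syntax, you can build real-time applications as easily as you would build a traditional app on a relational database. How do Kafka Streams and ksqlDB differ? ksqlDB is built on top of Kafka Streams, a lightweight but powerful Java library for enriching, transforming, and processing real-time streams of data. With Kafka Streams at its core, ksqlDB sits on a well-designed, easy-to-understand abstraction layer, so beginners and experts alike can readily unlock and make full use of the power of Kafka.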

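Simple architecture, advanced capabilities

Push and pull queries

Query your tables and streams in two ways: continuously subscribe to query results that change as new events occur (push queries), or look up results at a point in time (pull queries). This removes the need to integrate a separate system to serve each kind of query.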
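The difference between the two query styles can be sketched in plain Python. This is a conceptual illustration only, not the ksqlDB client API: the `MaterializedCounts` class and its `subscribe`, `apply`, and `pull` methods are hypothetical names invented for this example.

```python
class MaterializedCounts:
    """A toy materialized table that counts events per key."""

    def __init__(self):
        self._counts = {}
        self._subscribers = []

    def subscribe(self, callback):
        # Push-style: the callback is invoked on every future change.
        self._subscribers.append(callback)

    def apply(self, key):
        # Update the table for a new event, then notify subscribers.
        self._counts[key] = self._counts.get(key, 0) + 1
        for callback in self._subscribers:
            callback(key, self._counts[key])

    def pull(self, key):
        # Pull-style: return the current value once, then finish.
        return self._counts.get(key)


table = MaterializedCounts()
pushed = []
table.subscribe(lambda key, count: pushed.append((key, count)))

for page in ["home", "cart", "home"]:
    table.apply(page)

snapshot = table.pull("home")
```

Here `pushed` accumulates one entry per change, which is the push-query behavior, while `snapshot` holds only the latest count for a single key, which is the pull-query behavior. Both are served from the same state, which is the point the paragraph above makes.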

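Fully managed cloud hosting

Eliminate the burden of operating your own ksqlDB infrastructure by using the fully managed service in Confluent Cloud. With self-service provisioning, in-place upgrades, and a 99.9% uptime guarantee, you can focus on building useful application features instead of managing clusters.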

User-defined functions

Extend ksqlDB with custom functions tailored to your use case. Express your own data processing logic in Java and expose it to the ksqlDB engine through easy-to-use hooks.

Built-in connectors

Easily move streams of data between existing systems and ksqlDB. ksqlDB can run pre-built connectors directly on its servers, so you do not need a separate Kafka Connect cluster for event capture.

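Industry-leading security

Use ksqlDB while keeping your data safe with role-based access control, audit logs, and confidentiality protection. Confluent designs its products with security in mind, making ksqlDB secure by default.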

Enterprise-grade support

Get expert guidance 24/7, with fast problem resolution and bug fixes. Confluent's experts support your ksqlDB needs as well as every need across the entire data in motion platform.

Ready to get started?

Getting started with ksqlDB is easy. Sign up today and join a demo or a hands-on workshop.

Join a live demo

Try ksqlDB for free

Virtual hands-on lab

Free ksqlDB 101 course

Additional resources

Building stream processing applications with Confluent

Streaming applications without the infrastructure

Develop a streaming ETL pipeline from MongoDB to Snowflake with Apache Kafka

Documentation: ksqlDB

Keep learning with Confluent

Running Apache Kafka® in 2021: an ebook on cloud-native services

See how Confluent Cloud speeds up app development and frees up staff and budget.

How Confluent complements Apache Kafka® (ebook)

Learn how Confluent delivers a complete, secure, enterprise-grade distribution of Kafka.

Modernize your business with Confluent's connector portfolio

Learn how to connect data in motion more quickly, securely, and reliably using more than 120 expert-certified, pre-built connectors.